Good Listener, Difficult Coworker

Unlocking AI Adoption by Adapting to How We Work

AI chat is the most natural interface ever built. The shift it created happened fast and it was genuine. Where we used to query, gather results, and assimilate information ourselves, we now type what we're thinking and the system aggregates, synthesizes, and summarizes for us. No learning curve. No menus to navigate. You just talk (or type).

That simplicity is the superpower. Adoption was explosive not because AI was impressive, but because the user didn't have to learn anything. Every other productivity tool in history required the user to meet the tool on its terms: learn the interface, understand the mental model, figure out the workflows. Chat inverted that. The tool met the user on their terms. You already knew how to have a conversation. That was enough.

But we're starting to give that superpower back.

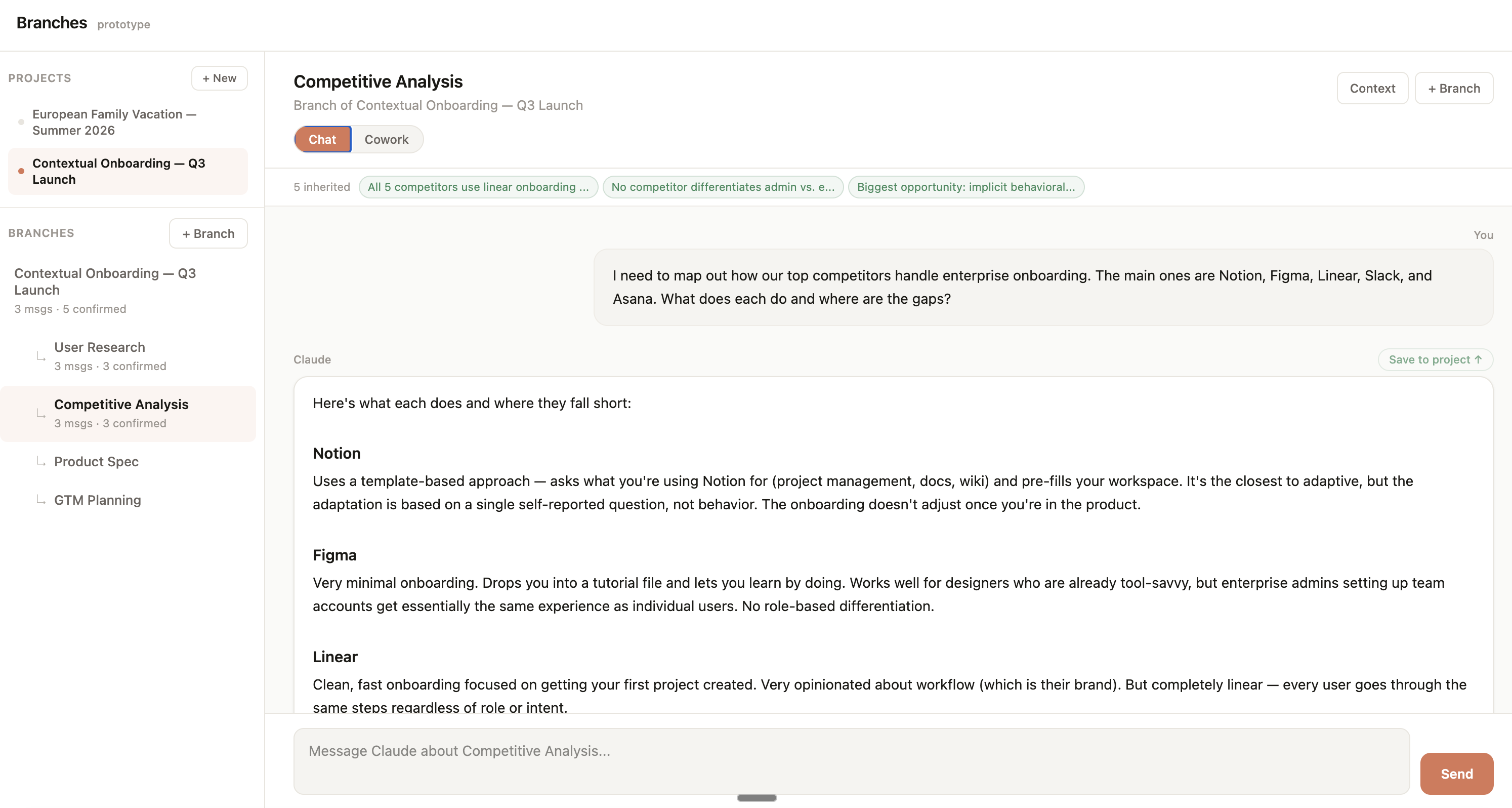

As models have matured — agentic execution, tool use, multi-step reasoning, artifact production, extended context — the product surfaces built around them have started to fragment. Today, I'm going to pick on Claude (only because I'm a fan and avid user), but this issue is more pervasive. Claude has chat for conversation, Cowork for autonomous tasks, Projects for persistent context, and Code for terminal-based development. Each is powerful. Each solves a real problem. And each asks the user to learn something new.

Where do I go to get work done? Should this be a chat or a Cowork task? Should I start a project, or is this a one-off conversation? How do I get the output from Cowork into my project? Will this conversation remember what I discussed in that one?

These are routing decisions. And every routing decision is a step backward on the original promise. It asks the user to understand the system's internal architecture in order to use it effectively. The user has to move to the tool instead of the tool moving to the user.

I've used Claude as my primary working tool for over a year while building Crib Equity, for product prototyping, market research, investor presentations, financial modeling, competitive analysis, and long-form writing. Outside of work, I've used it for everything from trip planning and home projects to family meal planning.

Across all of these, a pattern has been emerging. I regularly find myself uncertain whether something should be a chat, a Cowork task, or a project conversation. Often, I start in one place, realize mid-stream it should be another, and lose context in the transition.

If that's my experience as someone who uses Claude daily across a wide range of tasks and product surfaces, I suspect most users aren't even getting far enough to have the routing problem. They stay in chat, use a fraction of what's available, and never discover what the models can actually do for them. When I talk to people about how I use AI tools, the most common response is some version of "I had no idea it could do that" or "how do you actually get it to do that?" The capability gap isn't between what models can do and what users want. It's a growing gap between what models can do and what users know to ask for.

Why This Matters Commercially

This matters commercially because it defines where AI adoption stalls.

There's a useful distinction between products that are vitamins and products that are medicine. Vitamins promise optimization and compete with entertainment. Medicine is a necessity. It competes with a salary line, an assistant you'd otherwise hire, a consultant you'd otherwise pay. That's a completely different willingness-to-pay conversation. Claude can be both, but surface fragmentation risks adding friction that keeps users from reaching medicine territory. The user who stays in chat never sees Claude produce a research deliverable autonomously. The user who tries Cowork without project context never sees Claude respond with real awareness of their situation. The capabilities exist. The pathways to discovering them don't (yet).

As we add more capability, we need to be especially careful not to leave users behind in accessing it. At this point the transition from vitamin to medicine is no longer about better models or more features. The transition happens when the product stops asking users to figure out how to access what the models can do, and instead brings those capabilities to the user through a frictionless interface they already know.

Mode Fluidity

More features are coming, but more features aren't the fix. The fix is adapting these tools not only to how we communicate, but to how we work.

First, through mode fluidity. Chat and agentic work don't need to be separate products requiring an upfront choice. They can be ends of a spectrum the user moves between naturally. You're talking through a problem, and at some point you say "can you go pull that together for me?" Claude can do this work independently — research, comparison, artifact production — and deliver the output back into the conversation. The shift from dialogue to delegation is implicit in the request, not the product surface.

Think about how we've adapted to work with our human colleagues. You don't formally switch from "discussion mode" to "delegation mode." You just talk, and at some point you ask them to go do something. They do it and come back. The interaction is fluid because they've figured out the right level of autonomy from context. AI and agentic products can work the same way. Reliably detecting user intent to delegate versus discuss is exactly the kind of nuanced intent classification that recent advances in reasoning and instruction-following have made viable.
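As a rough illustration of that routing step, here is a minimal Python sketch. The keyword heuristic is only a stand-in for the model-based intent classification described above, and every name in it (`Mode`, `DELEGATION_CUES`, `classify_intent`, `route`) is hypothetical rather than part of any Claude product or API.

```python
from enum import Enum


class Mode(Enum):
    DISCUSS = "discuss"
    DELEGATE = "delegate"


# Hypothetical cue phrases. A real system would ask a model to classify
# intent from the full conversation, not match against a fixed list.
DELEGATION_CUES = (
    "go pull",
    "pull that together",
    "put together",
    "go research",
    "draft a",
)


def classify_intent(message: str) -> Mode:
    """Return DELEGATE if the message reads like a request to go do work."""
    text = message.lower()
    if any(cue in text for cue in DELEGATION_CUES):
        return Mode.DELEGATE
    return Mode.DISCUSS


def route(message: str) -> str:
    """Pick a handler inside one conversation; the user never picks a surface."""
    if classify_intent(message) is Mode.DELEGATE:
        return "run autonomously, then post the result back into this conversation"
    return "reply inline"
```

The point of the sketch is where the decision lives: the system infers the mode from the message, so the user never has to choose between a chat and a task up front.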

Of course, there are good reasons chat, Cowork, and Code are separate surfaces today, including cost management, safety, and product legibility. Agentic work burns more tokens, has a different risk profile, and was easier to launch, price, and measure as a distinct product. But those are infrastructure and go-to-market constraints, not user-experience principles.

There's also a strong case for the separation on UX grounds: distinct surfaces create mental models. I know when I'm going to my engineer versus my operations lead, and I carry a framework for what to expect and what to ask for. Separate modes give users that same clarity. But human organizations work that way precisely because humans are specialized and can't do everything. The whole premise of AI chat was that the user doesn't need to know the org chart. Asking them to rebuild that routing intuition for AI surfaces reintroduces the exact overhead that chat was revolutionary for removing.

The separation was right for launch. Over time, the boundary can dissolve as the product develops. Controls such as token-budget guardrails, confirmation prompts for expensive operations, and visual state changes during autonomous work can make fluidity safe and economically sustainable. The user-facing experience becomes one continuous conversation. The infrastructure boundaries stay. The interaction boundary goes away again.
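To make the guardrail idea concrete, here is a minimal Python sketch of a gate that runs before any delegated work starts. It is an assumption-laden illustration, not a description of how any product works: the names (`Decision`, `Guardrails`, `gate_task`) and the token thresholds are invented for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    RUN = "run"          # cheap enough to start silently
    CONFIRM = "confirm"  # expensive: ask the user first
    REFUSE = "refuse"    # would exceed the remaining budget


@dataclass
class Guardrails:
    # Both thresholds are illustrative assumptions, not real product limits.
    confirm_above_tokens: int = 50_000
    remaining_budget_tokens: int = 500_000


def gate_task(estimated_tokens: int, guardrails: Guardrails) -> Decision:
    """Decide how a delegated task proceeds, based on its estimated cost."""
    if estimated_tokens > guardrails.remaining_budget_tokens:
        return Decision.REFUSE
    if estimated_tokens > guardrails.confirm_above_tokens:
        return Decision.CONFIRM
    return Decision.RUN
```

A `CONFIRM` result would surface as a confirmation prompt inside the same conversation, so the cost boundary stays in the infrastructure while the interaction stays continuous.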

Context That Flows With Work

The second part is context that flows with work. Today, Projects give conversations shared context such as documents, instructions, and reference material. This was an important step. But that context flows in only one direction: in. Decisions and outputs produced inside a conversation stay trapped there unless you manually extract and re-upload them.

This creates two failure modes that anyone doing complex work in Claude hits quickly. You can split work across separate conversations, but each starts partially blind, and you bear the context-switching cost of re-establishing context that already exists somewhere else in your project. Or you can keep everything in one conversation, but then both context windows degrade. Claude's context window fills with noise from interwoven topics, and the user's own context window is similarly taxed by having to scroll through a long, tangled chat, find what they need, and remember what's been decided. The user becomes the project manager, manually shuttling context between threads.

The natural evolution is bidirectional context. Claude auto-identifies decisions and outputs within a conversation and suggests promoting them to the project level, where they become immediately available in every other conversation within that project. The user confirms or dismisses with a single interaction, and can also manually promote anything on demand. This keeps the signal high without asking the user to do project management work. Context flows up from conversations to the project as naturally as it flows down from the project to conversations. And the value compounds as models improve: cross-conversation synthesis and reasoning across context can spot conflicts and make connections the user hasn't made yet. That will turn promoted context from a convenience into a capability multiplier.
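The promotion flow can be sketched as a small data model. This is a minimal Python sketch (3.9+) under stated assumptions: `Project`, `Conversation`, and their methods are hypothetical names chosen for illustration, and the step where a model identifies a decision is reduced to a plain `suggest` call.

```python
from dataclasses import dataclass, field


@dataclass
class Project:
    # Items visible to every conversation in the project.
    shared_context: list[str] = field(default_factory=list)


@dataclass
class Conversation:
    project: Project
    # Suggested promotions awaiting a confirm or dismiss from the user.
    pending: list[str] = field(default_factory=list)

    def visible_context(self) -> list[str]:
        """Context flowing down: a copy of the project-level items."""
        return list(self.project.shared_context)

    def suggest(self, item: str) -> None:
        """Queue an item for promotion unless it is already known."""
        if item not in self.pending and item not in self.project.shared_context:
            self.pending.append(item)

    def confirm(self, item: str) -> None:
        """Context flowing up: move a pending item to the project level."""
        self.pending.remove(item)
        self.project.shared_context.append(item)

    def dismiss(self, item: str) -> None:
        """Drop a suggestion without promoting it."""
        self.pending.remove(item)

    def promote(self, item: str) -> None:
        """Manual promotion on demand, skipping the suggestion step."""
        if item not in self.project.shared_context:
            self.project.shared_context.append(item)


# Usage: a decision confirmed in one conversation shows up in another.
project = Project()
pricing_chat = Conversation(project)
deck_chat = Conversation(project)
pricing_chat.suggest("Decision: use annual pricing")
pricing_chat.confirm("Decision: use annual pricing")
print(deck_chat.visible_context())
```

Keeping suggestions in a separate `pending` list is the design choice that matters here: nothing reaches the shared project context until the user confirms it or promotes it manually.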

Both of these changes serve the same principle: the product should adapt to the user, not the other way around. The natural language interface solved the interaction barrier by meeting users where they are. These changes similarly solve the workflow barrier by having the product handle routing, context management, and execution on behalf of the user, rather than asking them to understand how the system works.

And the timing matters. Today's models have crossed the threshold where these product changes unlock real value, and the changes will become more valuable as capabilities continue to advance. The bottleneck is no longer what AI can do. It's whether the product makes that capability accessible.

The superpower of AI chat was asking nothing of the user. As models grow more capable and the product grows more complex, that should remain the foundational product primitive, tested by one question: can the user access this without learning anything new?

A Working Prototype

I built a working prototype (using Claude Code; source on GitHub) to test whether the interaction model for context promotion holds up in practice. It demonstrates project-level context inheritance, auto-suggested promotion of decisions from conversations back to the project, editable context at every level, and live Claude API integration where responses are informed by inherited project context. If you'd like to give it a try, drop me a note at hello@bluehour.run for demo access.